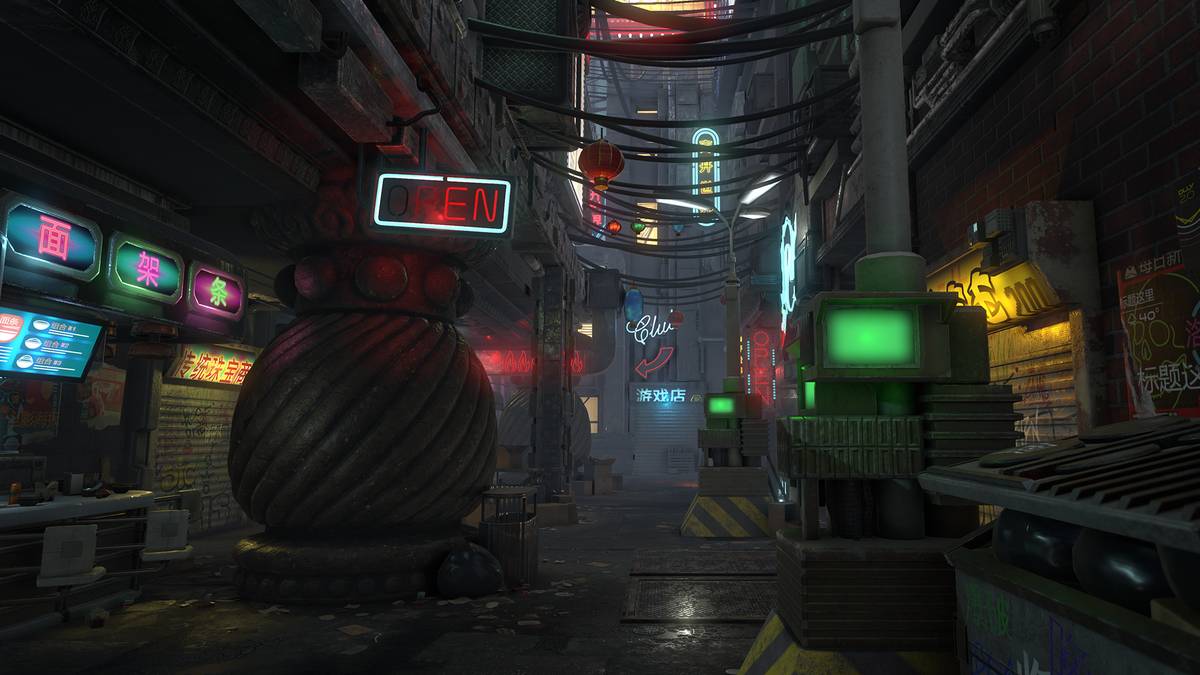

Recently released by Seismic Games and Alcon Media Group, Blade Runner: Revelations is an enthralling interactive story and full VR game based on the iconic Blade Runner universe, set shortly after the original film and leading up to the narrative of Blade Runner 2049. As the last of the Nexus Six Replicants die off and the Replicant Underground Resistance goes on the offense, the city falls into a state of unrest.

Players will assume the role of “Harper”, a seasoned blade runner who unravels a twisted replicant plot that threatens the delicate balance of Los Angeles in 2023. From the moment players put on their VR headset, they will be instantly transported to the alluring neo-noir city of Los Angeles, where they will get to choose their fate in a multi-branching story experience.

With Blade Runner: Revelations’ narrative exploring themes of being human in a world where technology and biology have become indistinguishable, many key elements have to come together to make this universe work. One of those elements is the sound design and effects, produced by the team at Hexany Audio, a Los Angeles-based game audio company.

Can you imagine playing this game on mute? Most players don’t realize how much goes into making these gaming worlds feel authentic, especially when it is a virtual reality experience, so we decided to speak with Richard Ludlow and Jason Walsh about this process on Blade Runner: Revelations and some of their other titles too.

Walsh is one of the sound designers at Hexany, and Ludlow is the audio director and owner of the company. Some of Hexany’s previous credits include Tencent’s Arena of Valor, H1Z1 by Daybreak Game Company, the King’s Quest series published by Activision, Into the Stars, the Assassin’s Creed Syndicate release trailer and Moonlight Blade, to name a few.

Because the Lenovo Mirage Solo is the first-ever standalone Daydream headset, was it hard to test what the sound design would be like on it? What was that process like? A lot of trial and error?

RICHARD: Great question! Because this project was cross-platform, meaning it supported both the Mirage Solo and a variety of Daydream-compatible phones, we had to ensure we were supporting a variety of devices in terms of performance and, more relevant for us, a wide variety of listening devices.

With VR it’s imperative that players wear headphones, and even that can be an educational process. You’d be surprised by how many people try to play VR games on Daydream with just the built-in speaker, which obviously makes any type of spatialized audio irrelevant.

So while we absolutely did a lot of device-specific testing on Android devices like the Pixel, we actually didn’t do a ton of testing on the Mirage Solo specifically, since it has no built-in headphones and we knew we had to support an array of devices. Testing on the Solo was more relevant for engineering and those working on the visual side, so most of our work was done on the Pixel. Now, had the Mirage Solo been released with built-in headphones, this would have been a very different story.

How closely did you work with the game’s composer, Sean Beeson, on this project?

RICHARD: There were actually several composers for the music on this project. Each brought a unique take on the Blade Runner universe, and it was great to implement their music in the experience. Various scenes had moments that required escalation in tension based on game parameters, so in these instances we were absolutely going back and forth with the composer and music director to figure out the best approach for them to write and export elements so we could implement them appropriately.

Staying true to the Blade Runner universe, though, the game is pretty cinematic, which meant a lot of linear moments. That allowed key scenes to be scored specifically and accurately to highlight their drama.

Does the genre of the project play into your creative process at all?

JASON: Absolutely. I take whatever time is allowed to explore the mood and aesthetic that is communicated to us at the start of the project. For a franchise with a rich history like Blade Runner, it meant watching the movies and deconstructing sounds from certain scenes to establish the sonic characteristics that make the Blade Runner universe feel the way it does. Since this is also a VR game, it means creating sound that helps players focus on what’s important without distracting from the immersion of the experience. I find it easiest to accomplish this by starting broad with my sound design to make sure every sound that follows fits into that established mood.

We heard you got to use some of the stems from the original Blade Runner in this game. Can you tell us which ones in particular?

JASON: Alcon was awesome for sharing the Foley sessions from Blade Runner 2049 with us. Hearing isolated recordings of the kinds of materials they used for clothing movement and environment interactions was a big help in my creative process for the game. It was also a great educational tool. To stay authentic to a certain kind of sound, you have to reverse engineer it to figure out how it was made. “They used this effect here.” or “This sounds like it could have been this material.” are thoughts I often have when I’m listening to things. These sessions took a lot of that guesswork out of the process.

Is there a different approach to creating sounds for a VR game, like Blade Runner: Revelations, as opposed to a traditional game?

RICHARD: Definitely. It takes a lot more time. In a traditional game you might be able to more easily separate asset design of specific sounds from the implementation or integration of them in-engine. In VR this is much more difficult since the technical integration heavily influences how we will design assets.

For example, an explosion off to the player’s right might need to be highly spatialized so the player knows where to look. While in a traditional game this might just be a 3D mono asset, in VR it could be one mono 3D layer run through HRTF processing, like that provided by Google Resonance, with another, more full-frequency-rich element also spatialized but not run through Resonance. All of this is coupled with a quad or stereo 2D element of low-frequency, non-directional energy for oomph and emphasis. So yes, it’s absolutely a different approach: one that’s much more technical and much more time-consuming, but also much more exciting.
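To make the layering Richard describes a little more concrete, here is a minimal Python sketch that models the explosion as three layers and decides which mixer bus each one goes to. The `Layer` type, the bus names and the channel counts for the first two layers are hypothetical illustrations, not Hexany’s actual setup, and no real Resonance or audio middleware API is called.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Layer:
    """One element of a layered sound event (hypothetical model)."""
    name: str
    channels: int      # 1 = mono, 2 = stereo, 4 = quad
    spatialized: bool  # positioned in 3D relative to the listener
    hrtf: bool         # sent through an HRTF spatializer such as Resonance


def explosion_layers() -> List[Layer]:
    # Assumed channel counts: the interview only says the first layer is
    # mono 3D and the last is a quad or stereo 2D low-frequency element.
    return [
        Layer("directional_core", channels=1, spatialized=True, hrtf=True),
        Layer("full_range_body", channels=1, spatialized=True, hrtf=False),
        Layer("low_end_oomph", channels=4, spatialized=False, hrtf=False),
    ]


def validate(layer: Layer) -> None:
    # In this sketch, HRTF processing expects a positioned mono source.
    if layer.hrtf and (layer.channels != 1 or not layer.spatialized):
        raise ValueError(f"{layer.name}: HRTF layers must be mono and spatialized")


def route(layer: Layer) -> str:
    # Hypothetical bus names: HRTF bus, plain 3D panning bus, or 2D bed.
    validate(layer)
    if layer.hrtf:
        return "hrtf_bus"
    if layer.spatialized:
        return "3d_bus"
    return "2d_bus"


if __name__ == "__main__":
    for layer in explosion_layers():
        print(layer.name, "->", route(layer))
```

Run as a script, it routes the three layers to `hrtf_bus`, `3d_bus` and `2d_bus` in that order. In a real engine, those buses would be whatever spatializer and mixer groups the middleware provides; the point is only that each layer of one "explosion" is treated differently.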

JASON: I like thinking from the player’s perspective, which is always locked to a first-person camera. Your eyes are being tricked into believing it’s real, but to be really convincing, your hearing has to be tricked as well. A lot of work goes into getting the game’s audio mix to feel as natural as possible, even if it’s a lot of super fictitious sounds.

You did all the sound effects for the game but you also did music implementation. Can you tell the readers what exactly that entails?

RICHARD: This is different for every project, but we actually have two great composers in-house at Hexany, so it’s something we understand quite well and deal with all the time. Though our composers didn’t contribute to the score for this project, we worked closely with the music director and occasionally with the composers to break the music down into different elements so that we could trigger it dynamically. Due to the cinematic nature of Revelations, extensive interactive music systems weren’t needed, but when they are, we will often be building these systems and concepting heavily with the composer to make sure everyone understands the systems and how best to compose music for them. And of course, composers will always come to us with great suggestions we haven’t thought of, and we’ll work with them to figure out how to score a scene dynamically the way they envision it playing out.

What was the most difficult effect in Blade Runner: Revelations to achieve? Why?

JASON: When the player flies through the city in their spinner, there are a lot of sounds coming and going around the vehicle. We had to get creative with how all of those sound assets are placed in the game. Large portions of the city environment sounds were merged into a single audio file. This meant finessing things with pitch, speed, stereo width, reflections and reverbs to trick the brain into hearing those sounds passing above, below or to the sides of you at the appropriate times. It was definitely a fun opportunity to get experimental!

You did the sound design for Tencent’s Arena of Valor, which has become the largest MOBA in the world. What was the most challenging character for you in that game to create sounds for?

JASON: I don’t think I could point to one particular character because there are just so many, each with their own set of challenges. The hardest aspect of designing sound for a MOBA of this scale is making sure every hero and their additional skins have a unique sonic identity. It’s really satisfying to play a hero whose move set looks and sounds badass. I work to make sure I’m delivering to the standard Arena of Valor has set so other players can get that same satisfaction.

Which characters specifically did you work on in Arena of Valor?

JASON: I’ve worked on sound for Raz, Zill, Flash, Liliana, Fennik, Wisp, Nakroth, Yorn, Violet, Arum, Ormarr, Diaochan, Tulen, and a host of other heroes that are in international versions of the game or not yet released!

You are credited as Audio Implementer for Disney Infinity (Wii). Can you tell us what exactly you did for that game?

RICHARD: This was an awesome title to work on! At the time I was in-house at Heavy Iron Studios, which was handling the Wii and Wii U ports for this game. They’re a great bunch of people to work with and gave me my start in the game world. Infinity was a blast to work on; the game was built for Xbox 360 / PS3 at the time, and we were tasked with moving it to Wii and Wii U. This is actually a lot more work than you might imagine, and it essentially involves rebuilding the game almost entirely using existing assets.

The main goal of any port to a less powerful system is to maintain the integrity of the original while making creative and technical decisions that allow it to run on the weaker console. For example, the interactive music system in Infinity was too performance-intensive for the Wii, and it became my job to work with the designers to cull down its complexity and rebuild the entire thing in more performant scripts. We couldn’t maintain every element of interactivity, but again, it’s about trying to maintain the overall vision of the original.

You worked on the reboot of Activision’s King’s Quest. What was your process for deciding what you were going to update while keeping the original sound of the game?

RICHARD: Another great project to work on! This was such a rare opportunity, as we were able to resurrect an older classic franchise and help re-imagine it. A lot of thought and discussion with our lead sound designer, Jacob Rhein, went into deciding which sounds we would retain. The item pickup sound was the most iconic one we retained; we re-created it entirely from scratch and enhanced it a bit, but it’s largely the same as one of the original game’s pickup sounds. For other iconic sounds, we based our versions on the original elements but made sure to re-imagine them for the new series while still retaining some familiarity for players who were fans of the originals.

Some of the coverage you find on Cultured Vultures contains affiliate links, which provide us with small commissions based on purchases made from visiting our site.